N or Space for next slide, P for previous slide, F for full screen, M for menu

Lec 6: Code and Data

Boaz Barak (instructor), Salil Vadhan (co-instructor), Juan Perdomo (Head TF).

Team: Aditya Mahadevan, Brian Sapozhnikov (surveys), Chan Kang (extension), Gabe Abrams (Ed Tech), Gabe Montague (NAND web), Jessica Zhu (proof workshops), John Shen, Juan Esteller (NAND implementation), Karl Otness, Katherine Binney, Lisa Lu, Mark Goldstein (clicker expert), Stefan Spataru, Susan Xu, Tara Sowrirajan, Thomas Orton (Advanced Section), Wentong Zhang

Slides will be posted online.

Laptops/phones only on last 3 rows please.

Three Theorems

Theorem 1: For every function \(F:\{0,1\}^n \rightarrow \{0,1\}\) there is an \(O(2^n/n)\) line NAND program computing \(F\).

Theorem 2: There is an \(O(s^2 \log s)\) line NAND program that computes \(EVAL_{n,m,s}:\{0,1\}^{3s\lceil \log(3s+1) \rceil+n} \rightarrow \{0,1\}^m\), where \(EVAL_{n,m,s}(P,x)=P(x)\).

Theorem 3: There exists \(F:\{0,1\}^n \rightarrow \{0,1\}\) that is not in \(Size(2^n/(100n))\).

The counting argument

How many functions are there from \(\{0,1\}^4\) to \(\{0,1\}\)?

a. 16

b. 128

c. 1024

d. 65536

Lemma: \(|\{ f | f:A \rightarrow B \}| = |B|^{|A|}\).

Lower bound via counting

Theorem 3: There exists \(F:\{0,1\}^n \rightarrow \{0,1\}\) that is not in \(Size(2^n/(100n))\).

Proof: We’ll show \(|Size(2^n/(100n))| \lt 2^{2^n}\).

Represent \(s\) line program in \(\leq 10s\log s\) length string.

For \(s=\tfrac{2^n}{100n}\), length \(\leq 10\tfrac{2^n}{100 n}\log(\tfrac{2^n}{100 n})\)

\(\leq \tfrac{2^n}{10 n}n = \tfrac{2^n}{10}\).

\(|Size(2^n/(100n))| \lt 2^{2^n/10}\). QED

Restatement: \(|Size(2^n/(100n))| \leq 2^{0.1 2^n}\).

Corollary: 99.9% of functions require \(\Omega(2^n/n)\) lines.

Fact: Can simulate \(s\)-node neural network by \(s^2\) line NAND program.

Corollary: 99.9% of functions \(F:\{0,1\}^{100 \times 100} \rightarrow \{0,1\}\) require neural network of \(\gt 10^{1000}\) nodes.

Proof: For \(n=10,000\), \(2^n/(100 n) \sim 10^{3000}/10^6\).

Contrast with \(\sim 10^{82}\) atoms in observable universe.

Almost no function on 100 by 100 images is computable by less-than-universe-sized neural network!

Restatement: A random function is uncomputable by less-than-universe-sized neural network with probability \(\geq 0.999\).

Is it all bad?

A random function is uncomputable by less-than-universe-sized neural network with probability \(\geq 0.999\).

Big picture

Questions

- Given algorithm \(A\) and input \(x\), what is \(A(x)\)?

- Given algorithm \(A\), what is its maximum running time on all length \(n\) inputs?

- Given function \(F:\{0,1\}^n \rightarrow \{0,1\}\), what is the best algorithm to compute \(F\)?

Three Theorems

Theorem 1: For every function \(F:\{0,1\}^n \rightarrow \{0,1\}\) there is an \(O(2^n/n)\) line NAND program computing \(F\).

Theorem 2: There is an \(O(s^2 \log s)\) line NAND program that computes \(EVAL_{n,m,s}:\{0,1\}^{3s\lceil \log(3s+1) \rceil+n} \rightarrow \{0,1\}^m\), where \(EVAL_{n,m,s}(P,x)=P(x)\).

Theorem 3: There exists \(F:\{0,1\}^n \rightarrow \{0,1\}\) that is not in \(Size(2^n/(100n))\).

Thm 3: something can’t be done

Thm 1+2: something can be done: algorithms, programming

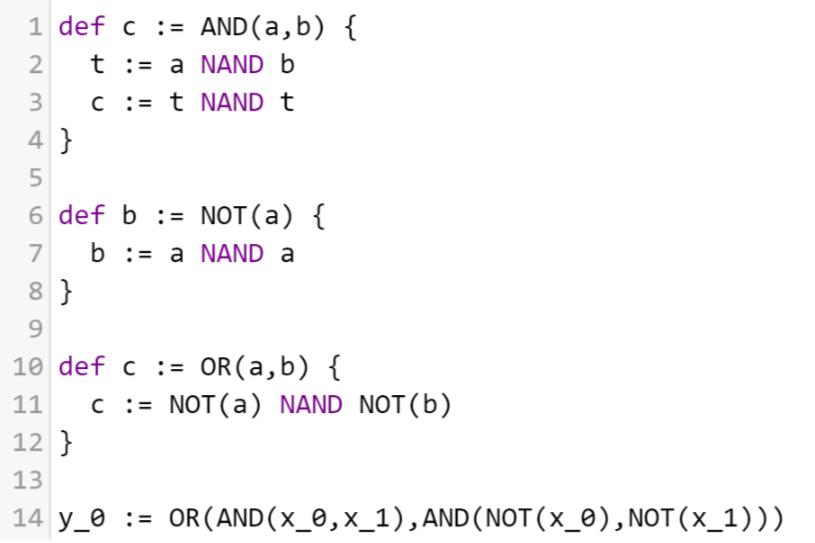

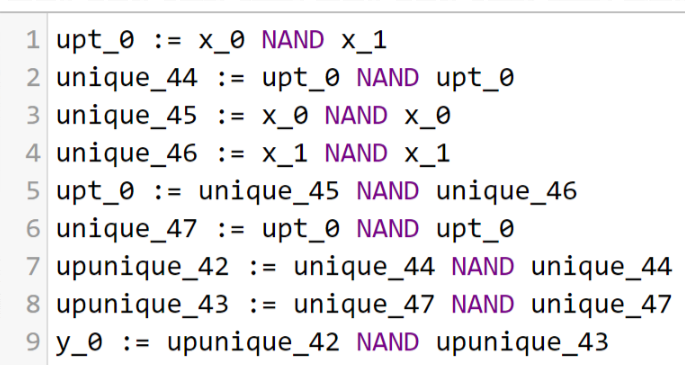

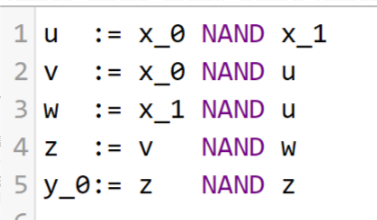

Algorithmic tools for NAND

\(EQUAL_1:\{0,1\}^2 \rightarrow \{0,1\}\)

\(00,11\mapsto 1\) , \(01,10 \mapsto 0\)

In pairs/groups: Find program for \(EQUAL_1\), can test it nandpl.org. Can you do it in 5 lines? Can you do \(EQUAL_{10}\)?

Equality

Equality

Equality

\(EQUAL_1(x_0,x_1) = NOT(XOR_2(x_0,x_1))\)

Thm: \(EQUAL_n \in Size(7n)\)

General NAND techniques

Can “hardwire” constants. E.g. for \(a\in \{0,1\}^n\) compute \(EQUAL_a:\{0,1\}^n \rightarrow \{0,1\}\) that outputs \(1\). Use zero and one.

Data structures: Use \(LOOKUP\), \(EQUALITY\) to implement arrays, lists, etc.. (good enough if you don’t care about the difference between \(O(n)\) and \(O(n^2)\))

Replace finite loops or recursion with copy-paste.

Rule of thumb: If your computer can do it in \(s\) cycles, there is a \(\approx s^2\) line NAND program to do it too.